Compendium Two

Transformations & Generations: Artificial Intelligence + Ecology

March 2026

Ruminating

How do I engage with nature?

I often look at the bucolic green perfection of Japanese Aquascapes on Instagram. "That isn't REAL nature", I think to myself.

There was a jar of algae in my room in India. For five long years, dried leaves decomposed into a thin crust of algae on a rock. I never trimmed it, I never added fertilizers. I forgot about it, for years at a stretch.

Algae in a jar. Nature, perhaps. circa 2025

Artificial Intelligence has a tendency to create a massive Ouroboros-like feedback loop; when prompted to generate nature, it draws up the most common (and sometimes, wrong) idea of nature, based on what general users of the service generate.

My approach to using AI models in generating any visual is (sometimes) a brute force, "throw-everything-at-the-wall-see-what-sticks" method.

Mostly, however, it is a recursive, repetitive, circular fine-tuning of what I want the model to generate for me.

My own mini-Ouroboros.

Using specific images to drive the generation of alternative heightmaps, with a recursive process: an example of how I use multiple passes of generations to get the result I want. (Project: Noise Geographies)

Sometimes, my workflows string together multiple passes of image generation, Style training, and prompting. Even when using tools like Fuser, the Styles are trained on images generated through combinations and permutations of satellite images in Midjourney. (Project: The Real and the Unreal)

Base datasets

Creating imagery with open/general-use models like Midjourney or Flux relies on open, generalized datasets such as LAION-400M.

One of my early processes included building novel datasets, or image "references", which can drive my generations in a particular aesthetic direction. Using images with multispectral or hyperspectral baselines can lead to interesting visual generations.

I generally use Midjourney to generate quick tests, which are fine-tuned through moodboards and styles. These "primary" generations are then used to train a LoRA, or as base images within ComfyUI and Fuser.

Multispectral Imagery

Testing image generations using the raw, processed Red/Green/NIR base image (using Midjourney v7)
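A minimal sketch of how a Red/Green/NIR capture could be turned into a viewable base image. It assumes the common color-infrared convention (NIR shown as red, Red as green, Green as blue) and a simple min-max stretch per band; the function name and the list-of-rows band format are hypothetical, not the workflow actually used here.

```python
def false_color_composite(nir, red, green):
    """Build an RGB image from three bands given as 2D lists of numbers.

    Assumed mapping (color-infrared convention): NIR -> R, Red -> G, Green -> B.
    """
    def stretch(band):
        # Min-max stretch each band to 0..255 so faint bands stay visible.
        flat = [v for row in band for v in row]
        lo, hi = min(flat), max(flat)
        span = (hi - lo) or 1  # avoid division by zero on a constant band
        return [[round(255 * (v - lo) / span) for v in row] for row in band]

    n, r, g = stretch(nir), stretch(red), stretch(green)
    height, width = len(n), len(n[0])
    return [[(n[y][x], r[y][x], g[y][x]) for x in range(width)]
            for y in range(height)]


# Tiny 2x2 example with made-up band values.
composite = false_color_composite(
    nir=[[0, 40], [80, 200]],
    red=[[10, 10], [20, 30]],
    green=[[5, 5], [5, 5]],
)
```

Healthy vegetation reflects strongly in NIR, so under this mapping it reads as saturated red, which is part of what makes such base images visually unfamiliar to a general-purpose model.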

IR Filter Photography

I use a 720 nanometer Infrared filter and a DSLR to create specialized photographs, which I then use to train a style or LoRA.

Satellite Imagery

Satellite images extracted from GIS (along with DEM/other data) to train image models on Orthophoto aesthetics (Project: Superfund Superbloom)

Test generations with multiple image sets including satellite imagery + IR Photography. (Using Midjourney v7)

The image generation process undergoes several iterations.

For every twenty images, one may be ideal.

This is combined with other ideal images to train a style or LoRA.

The resultant images from the style generations (with specific prompts) are used as a reference or retexture.

Finally, multiple images are collaged into a single canvas.

Project

The Zone: Chimeras in Superfundland (2025)

Capstone project for the Synthetic Landscapes Program at SCI-Arc

How do we face extreme toxicity?

How do we stir ecological emergence into exceedingly uninhabitable sites?

Using algorithmic frameworks and containment structures around a toxic chemical plant designated a "superfund" site.

An essay about this project was published in Ecological Design Collective's publication "Litter: Antidotes to Toxicity" in 2026. To read more, click the link below.

Using a "circle-packing" algorithm to decimate the superfund site: a meso-scale "field of toxic blooms", rendered into a series of structures, craters, debris fields, and remediation zones; categorized by scale. (This image was created using ArcGIS, Python in Claude Code, and Rhino)
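For readers unfamiliar with circle packing, here is a minimal sketch of one simple variant: random placement with rejection of overlaps, largest circles first. The site dimensions, radii, and function name are illustrative assumptions; the project's own script may work quite differently.

```python
import math
import random


def pack_circles(width, height, radii, attempts=2000, seed=0):
    """Place non-overlapping circles inside a width x height rectangle.

    Each radius is tried at random positions; a position is kept only if the
    circle does not overlap any circle already placed. Radii that never find
    a free spot within `attempts` tries are skipped.
    """
    rng = random.Random(seed)  # seeded so the layout is repeatable
    placed = []
    for r in sorted(radii, reverse=True):  # large circles first
        for _ in range(attempts):
            x = rng.uniform(r, width - r)
            y = rng.uniform(r, height - r)
            if all(math.hypot(x - cx, y - cy) >= r + cr
                   for cx, cy, cr in placed):
                placed.append((x, y, r))
                break
    return placed


# Hypothetical 100 x 100 site with three size classes of circle.
circles = pack_circles(100, 100, [20, 10, 10, 5, 5, 5])
```

Sorting by radius means the largest elements claim space first and smaller ones fill the gaps, which is one way a layout ends up naturally grouped by scale.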

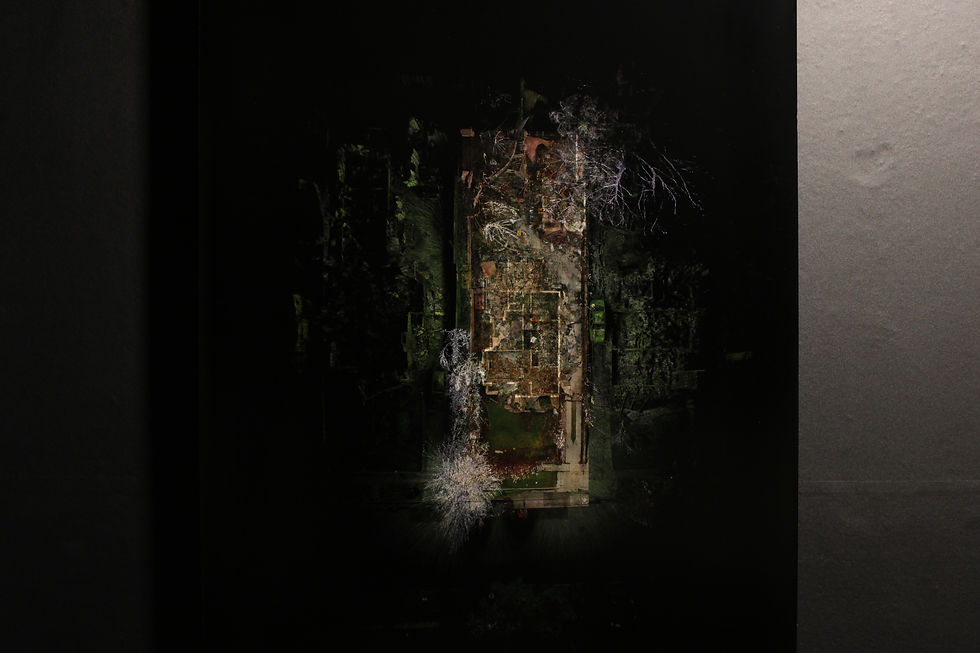

Aerial Imagery of The Zone

Imagining elements of "The Zone": Generated orthophotos (mimicking satellite or aerial photographs) of each Class of structure within the zone. These images were created using a recursive process, using Midjourney V7, Rhino, Midjourney Retexture, and Photoshop.

Base structure modelled using parametric tools on Rhino

Midjourney V7 used to retexture the model

Photoshop used to post-process the retextured model

An eerie lake forms with pooled rainwater and urban debris, with the massive structure creaking ominously as it tremors against the machinery.

Scenes from "The Zone", imagining the urban environment within the containment walls. (Created using Blender, Midjourney, and Unreal Engine with AI-generated audio)

Project

Disturbed (2025)

Disturb:

To agitate, to mix, to break up.

This project is based in Central Valley, CA.

How do you agitate farmland into a wetland ecosystem?

The speculative future of the farm; a bird's eye view.

Langer Farms, Central Valley, in 2025, satellite imagery

Future evolution of Langer Farms, from a dryland ecosystem to a water mixing facility. Created using QGIS, Midjourney v6, and Photoshop

Surfaces and Volumes

Bruno Latour's essay on Actor-Network Theory (ANT) discusses the "rhizome" as a replacement for a planar Cartesian surface: rather than subdividing land by area (a surface metric), we can study ecologies in terms of interaction networks (the rhizome). To create these interactions between water and organisms, I render the farmland into a series of volumes within which these interactions take place.

The Cartesian grid is subdivided into units, each a negative power of 2 of the 1-mile Cartesian unit (1/2 mile, 1/4 mile, 1/8 mile, and so on).
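One reading of this subdivision, assuming each level halves the side of the previous unit (side = 2^-k miles at level k), can be tabulated with a short sketch. The function name and the choice of three levels are hypothetical.

```python
def subdivide_mile(levels, base_miles=1.0):
    """Return (level, side length in miles, cells per base square) per level.

    Assumes level k has side = base_miles * 2**-k, so a base square holds
    (2**k)**2 cells at that level.
    """
    rows = []
    for k in range(1, levels + 1):
        side = base_miles * 2.0 ** -k
        rows.append((k, side, (2 ** k) ** 2))
    return rows


for level, side, cells in subdivide_mile(3):
    print(f"level {level}: {side} mi side, {cells} cells per square mile")
```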

Cultures of bacteria, archaea, protozoans are "introduced" to the farm runoff, forming microbial trophic pyramids which "use up" the excess nutrients in the water. These trophic systems also bring in macro-faunal species such as migrating birds, mammals and amphibians to this pseudo-oasis within the Central Valley.

A longer evolution of Langer Farms, from a water mixing facility to a riparian ecosystem. Created using QGIS, Midjourney v6, and Photoshop

The process for creating these satellite images involves creating training data of various ecosystems through GIS images, combining and generating initial images, and collaging multiple images on the base image.

Scenes from the Biotic Water Treatment Facility in Central Valley, CA: The thrumming pumps moving water and microbial cultures through a network of pipes, creating lurid hues of water with various bacterial and algal blooms (created using Rhino, Houdini, Unreal Engine, and audio effects from Eleven Labs)

Project

The Microcosm

Created in collaboration with Marti Vera Marsal, Ahmed Yakout, and Rudy Argote.

Part of the Synthetic Ecologies Seminar, Synthetic Landscapes.

The creation of a microcosm to study new ecological concepts.

This "terrarium" is a synthetic water body. The intent is to introduce microbes and algal cultures, documenting how the water changes through a timelapse recording of the terrarium over various stages of introductions, disturbances, and simulated ecological shocks.

Organic deposits line the 3D-printed "introduction" apparatus, a truncated pyramid. Zoom in to see the details.

Between February and September 2025, this experiment ran (chaotically) with various "introductions" - each introduction leaving a trail or depositional layer of organic detritus in its wake.

Representation of the microcosm: I used a "systems diagram" over time to map the various inputs, outputs, and conditions of the microcosm. This accounts for all the mechanical, static, environmental, and biotic components of the microcosm. (Authored by Kahin Vasi)

Samples of the detritus deposits in the microcosm show the presence of nematodes, various species of cyanobacteria and filamentous algae, fungal hyphae, paramecium and other zootrophic multicellular organisms. The cyanobacteria culture (pictured top centre) was developed from a water filter sample.

Our "aesthetic" sensibilities towards nature come from a notion of control; using chemical baths and additives to stem the growth of algae and fungi.

This experiment embraces the descent into ecological entropy as every element of the base breaks down and is layered with a crust of detritus.

When the terrarium dried out completely (this process can be seen towards the end of the timelapse), it left behind a crust of organic matter on the surface of the base, which - although coated with a white waterproof epoxy - soon became the substrate for fungal growth.

Experiments

Microhabitats

I created terrariums, aquariums, and microhabitats professionally for five years, something I still practice as a hobby today.

Gradually, my work on creating biotically sound enclosures (replete with flora and microfauna) turned into a series of long-term experiments studying the decay of organic matter over long time periods. This expanded to fungi and algae habitats, as well as a habitat dedicated to observing slime molds.

The Slime Mold Microhabitat (2024)

The slime mold habitat: slime mold was found on a branch from a site visit in 2024; this is the central branch (with visible mushrooms). The culture spread onto a wet paper towel, which was used to transfer the slime mold to its habitat.

The slime mold can be seen growing across various surfaces; all surfaces were sprayed with a bacteria-rich biofilm from aquariums, making it easier for the Physarum to colonize them. The structure of the slime mold growth is clearly visible as it extends up the surface of the habitat.

Detritivore Microhabitats (2019 - 2025, ongoing)

Documenting cycles of decay in a detritivore microhabitat with wild isopods (species from India), recording the daily progression of the decomposition of shredded carrots as feed stock. These cycles were recorded near continuously for over a year.

Isopods in various colonies, with a variety of climate conditions. (Note: all isopods are stored in specially modified containers to ensure proper air flow and moisture, and colonies are split or released if the population within a colony gets too large.)

These experiments often form the basis of several prompts or image generations, including as parts of training data where I want to explore the aesthetic of rot, decay, and subterranean networks.

Experiments

Pixels, Points, and Splats

Working with digital tools in two- and three-dimensional spaces has led me to develop novel ways of interacting with these media.

I've used AI to develop code which can manipulate images and 3D models by indexing and recognizing values of each unit - a pixel, point, or splat, allowing me to recompose each set as a generated or transformed collage, or simply to manipulate the location of the individual units.

Pixel Manipulations

Examples of images created using base data sets of satellite images, IR photography, Multispectral Photography, and photographs. Pixels are manipulated using Python scripts created iteratively using AI.

Using Python code with built-in edge-detection algorithms, I can manipulate base images of Bayan Obo Mine (lower left) and Deonar Dumping Grounds (lower right).

The script automatically creates a series of images using pixels which have been manipulated through various spatial mathematical algorithms.
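As an illustration of edge-driven pixel manipulation, the sketch below computes a Sobel edge strength and then cyclically shifts each row by an amount proportional to how much edge that row contains. Both the choice of Sobel and the row-shift rule are assumptions made for the example; the scripts used for the images above are not reproduced here.

```python
def edge_strength(gray):
    """Sobel gradient magnitude (|gx| + |gy|) for a 2D list of grey values.

    Border pixels are left at 0 because the 3x3 kernel does not fit there.
    """
    h, w = len(gray), len(gray[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = (gray[y - 1][x + 1] + 2 * gray[y][x + 1] + gray[y + 1][x + 1]
                  - gray[y - 1][x - 1] - 2 * gray[y][x - 1] - gray[y + 1][x - 1])
            gy = (gray[y + 1][x - 1] + 2 * gray[y + 1][x] + gray[y + 1][x + 1]
                  - gray[y - 1][x - 1] - 2 * gray[y - 1][x] - gray[y - 1][x + 1])
            out[y][x] = abs(gx) + abs(gy)
    return out


def shift_rows_by_edges(gray, scale=0.001):
    """Cyclically shift each row right by an offset tied to its edge content."""
    edges = edge_strength(gray)
    shifted = []
    for row, edge_row in zip(gray, edges):
        k = int(sum(edge_row) * scale) % len(row)
        shifted.append(row[-k:] + row[:-k] if k else list(row))
    return shifted


# A 4x4 test image with one vertical edge down the middle.
image = [[0, 0, 255, 255] for _ in range(4)]
result = shift_rows_by_edges(image)
```

Because pixels are only moved, never recoloured, the output keeps the palette of the source image while its spatial structure is displaced along the detected edges.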

Another Python script, created with AI, indexes "strips" of pixels within photographs and stitches them in sequences defined by similar mathematical, spatial algorithms.
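The strip idea can be sketched in a few lines: cut an image into vertical strips, index them, and stitch them back in an order produced by some rule. The function name, the list-of-rows image format, and the two ordering rules shown are hypothetical stand-ins for whatever sequences the actual script uses.

```python
def stitch_strips(image, strip_width, order_key):
    """Cut `image` (a list of pixel rows) into vertical strips and re-stitch.

    Strips are indexed left to right; `order_key` maps a strip index to a
    sort key, which decides the new left-to-right sequence.
    """
    width = len(image[0])
    starts = list(range(0, width, strip_width))
    order = sorted(range(len(starts)), key=order_key)
    return [
        [px for i in order for px in row[starts[i]:starts[i] + strip_width]]
        for row in image
    ]


photo = [[0, 1, 2, 3, 4, 5],
         [10, 11, 12, 13, 14, 15]]

# Reverse the strip order.
mirrored = stitch_strips(photo, 2, order_key=lambda i: -i)

# Even-indexed strips first, then odd-indexed strips.
interleaved = stitch_strips(photo, 2, order_key=lambda i: (i % 2, i))
```

Swapping the `order_key` function is enough to move between sequences, so one script can generate a whole series of variations from a single photograph.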

The generated images are then used in a further generative process by training styles or models in Fuser, ComfyUI, or Midjourney to create pseudo-real images.

Point Manipulations

Through LiDAR, photogrammetry, and 3D scanning, we're able to create dense point clouds which capture three-dimensional space.

I used AI-generated scripts to manipulate and distort these 3D point clouds, allowing me to create projections and "flattened" point cloud images, used largely in the Altadena Fire Scans Project as a part of SCI-Arc.
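A minimal sketch of one kind of flattening: rotate the cloud about the vertical axis, then drop the depth coordinate to get an orthographic elevation. It assumes points are plain (x, y, z) tuples with z pointing up; the function names are hypothetical and real scan data would normally carry colour and intensity as well.

```python
import math


def rotate_about_z(points, degrees):
    """Rotate (x, y, z) points about the vertical z axis."""
    a = math.radians(degrees)
    c, s = math.cos(a), math.sin(a)
    return [(x * c - y * s, x * s + y * c, z) for x, y, z in points]


def flatten_to_elevation(points):
    """Orthographic projection onto the XZ plane: drop y, keep (x, z)."""
    return [(x, z) for x, _, z in points]


# Two made-up points; turn the cloud 90 degrees, then flatten it.
cloud = [(1.0, 0.0, 2.0), (0.0, 1.0, 3.0)]
turned = rotate_about_z(cloud, 90)
profile = flatten_to_elevation(turned)
```

Choosing the rotation angle before projecting is what decides which facade of a scanned structure ends up facing the viewer in the flattened image.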

The Altadena Scans Project is a collaborative effort between the residents of Altadena, LACMA, and students and faculty at SCI-Arc, with support from ScanLAB Projects (UK) and a team of producers - Joel Feree, Sara Simon, Ade Ayoade, Natalie Rubio, Kahin Vasi, Marti Vera, Carlos Bonachea, Kai Johnson, Jillian Leedy, Sophie Pennetier, Pierce Meyers, and Matt Shaw - with the support of the SCI-Arc Resilient Futures Task Force, LACMA Art & Technology Lab, ScanLAB Projects, PT Capture, and The Little Things AI.

To learn more about this project, you can reach out to me at the email address at the end of this page.

Photographs of the Altadena Scans exhibition at SCI-Arc in October 2025

An elevational profile of the six homes scanned after the Eaton Fire, as part of the process of visualizing the effects of the fire. Created using Python, Autodesk ReCap, Photoshop, and Metashape

Splat Manipulations

New technology around 3D Gaussian Splatting (3DGS) has allowed more use cases of image-to-3D modelling to come up.

Similar to point clouds, each "splat" has a vector and a color/light value which can be manipulated. These manipulations can be done recursively with Python scripts; however, we can also use ML-Run scripts to scatter or manipulate Gaussians. These models are far more lightweight than point clouds, making them easier to process computationally.
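In 3DGS, each Gaussian stores a position, a scale and rotation (which together define its covariance), an opacity, and colour coefficients. The sketch below uses a simplified, hypothetical representation (a dictionary per splat with only position, colour, and opacity) to show what "scattering" Gaussians could look like; it is an illustration, not the script used for the work shown here.

```python
import random


def scatter_gaussians(splats, amount, seed=0):
    """Return copies of `splats` with each position jittered by up to `amount`.

    Only the position is changed; colour and opacity are carried over, and
    the input list is left unmodified.
    """
    rng = random.Random(seed)  # seeded so a scatter can be reproduced
    scattered = []
    for splat in splats:
        x, y, z = splat["position"]
        moved = dict(splat)
        moved["position"] = (
            x + rng.uniform(-amount, amount),
            y + rng.uniform(-amount, amount),
            z + rng.uniform(-amount, amount),
        )
        scattered.append(moved)
    return scattered


# Two made-up splats in a simplified format.
scene = [
    {"position": (0.0, 0.0, 0.0), "color": (255, 0, 0), "opacity": 1.0},
    {"position": (1.0, 2.0, 3.0), "color": (0, 255, 0), "opacity": 0.5},
]
jittered = scatter_gaussians(scene, amount=0.5)
```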

Interactive splats of the Rainforest dome at Gardens by the Bay and a small terrarium: You can zoom, enter and pan around the splat.

This splat was created with Inria's original source code from GitHub, and edited using SuperSplat's editors.

Custom Python code, created using Claude trained on the research/mathematics of Gaussian Splatting, to create a blended collage of splats.

Early tests of the blended splat: multiple splats can be seen within a single Gaussian scene.
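At its simplest, placing several splats in one scene amounts to moving one set of Gaussians into position and concatenating the lists. The sketch below assumes a simplified, hypothetical per-splat dictionary with a `position` field and an optional filter for choosing which Gaussians to bring across; a real blend would also have to reconcile scale, rotation, and colour coefficients.

```python
def blend_scenes(scene_a, scene_b, offset_b=(0.0, 0.0, 0.0), keep_b=None):
    """Merge two lists of splats into a single scene.

    Splats from `scene_b` are translated by `offset_b`; if `keep_b` is given,
    only the splats for which it returns True are included.
    """
    dx, dy, dz = offset_b
    moved = []
    for splat in scene_b:
        if keep_b is not None and not keep_b(splat):
            continue
        x, y, z = splat["position"]
        moved.append(dict(splat, position=(x + dx, y + dy, z + dz)))
    return list(scene_a) + moved


# Made-up scenes: bring only the opaque splats of the second into the first.
dome = [{"position": (0.0, 0.0, 0.0), "opacity": 1.0}]
terrarium = [
    {"position": (0.0, 0.0, 0.0), "opacity": 0.9},
    {"position": (1.0, 0.0, 0.0), "opacity": 0.1},
]
collage = blend_scenes(dome, terrarium, offset_b=(5.0, 0.0, 0.0),
                       keep_b=lambda s: s["opacity"] > 0.5)
```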

As with the point clouds, these splats are manipulated by indexing and transforming the data contained within three-dimensional point geometry.